This post is a guest contribution by George Siosi Samuels, managing director at Faiā. See how Faiā is committed to staying at the forefront of technological advancements here.

I’ve been diving into how artificial intelligence (AI), especially the rise of large language models (LLMs) and probabilistic thinking, is changing how we build software. It’s a full-on paradigm shift, and it’s got me thinking: Are we finally catching up to the way humans naturally think, or are we paving a new path altogether?

Let’s unpack this with a nod to Manus AI, JSON’s fading relevance, XML’s quiet comeback, and where LLMs like Claude might land in the next 12–18 months. Oh, and let’s throw scalable blockchains into the mix since they will become increasingly important in the AI space over the next five years.

AI’s probabilistic thinking: A mirror to human nature

Technically, humans are probabilistic thinkers at the core. We make decisions based on incomplete information, gut feelings, and patterns—think of how beliefs, cultures, and values act as our own “guardrails,” steering us through uncertainty. However, we have become used to setting our world up in more deterministic ways because it provides us with more certainty, even if illusory.

AI’s evolution, particularly with LLMs, is starting to reflect this. Probabilistic models don’t need pristine, structured data to shine—they thrive on messiness, inference, and context, much like we do in real life. But it certainly helps if the data itself is “clean” (think organic food over fast food but for data).

This is a loud and clear signal: We need to build applications that leverage AI’s probabilistic strengths, not fight against them with rigid, deterministic frameworks.

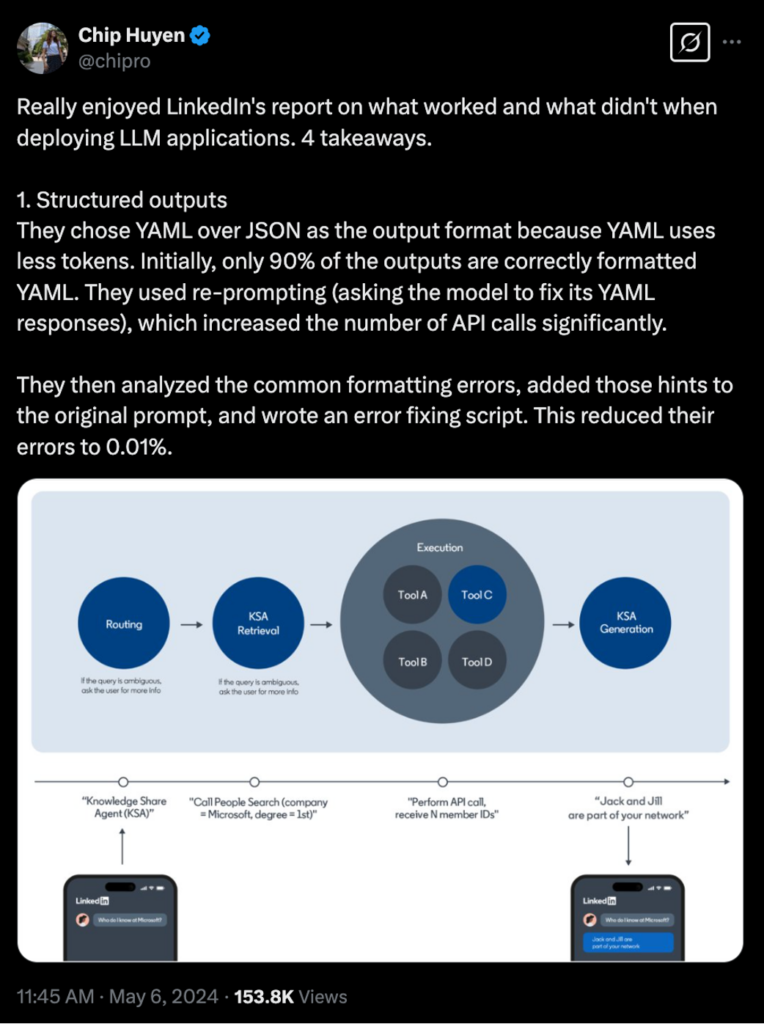

Take JSON parsing, for instance. For years, we’ve leaned on structured formats like JSON (and XML before it) to wrangle data for apps—clean, predictable, and human-readable. But I’ve found it’s becoming less ideal long-term. Why? Probabilistic AI models, like those powering ChatGPT, Claude, and more recently, Manus, increasingly handle raw, noisy data through inference, cutting out unnecessary token spend and complexity.

Cleaning data or forcing it into JSON can actually slow things down, adding overhead when the model could’ve just figured it out. It reminds me of how we used to think search needed catalog-based systems (like Yahoo) versus the intent-based magic of Google (NASDAQ: GOOGL)—both a cultural and paradigm shift were required back then, and we’re in a similar moment now.

XML’s quiet comeback: A nod to structure, reimagined

Speaking of XML, it’s quietly creeping back into the conversation, and I’m intrigued. While JSON dominates for its simplicity, XML’s richer tagging and hierarchical structure might find new life in AI-driven apps. Why? AI’s probabilistic nature doesn’t mean we ditch structure entirely; it means balancing flexibility with context.

XML’s ability to encode layouts, metadata, and relationships (think merged cells in tables, as Manus’ approach highlights) could pair beautifully with AI’s inference, especially for tasks like web scraping or document processing. It’s not about returning to XML’s 2000s heyday but reimagining it as a complementary tool for AI to parse and reason over rather than a rigid cage.
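To make the merged-cell point concrete, here is a minimal sketch in Python using only the standard library. The markup, the tag names (`table`, `row`, `cell`), and the `colspan` attribute are assumptions made up for illustration; they are not a format Manus or any other tool is known to use. The idea is simply that the span information survives in the markup, so downstream code (or a model) can still see which cells were merged.

```python
import xml.etree.ElementTree as ET

# Hypothetical markup: the first row holds one header cell merged across two columns.
DOC = """
<table title="Quarterly revenue">
  <row>
    <cell colspan="2" role="header">2024</cell>
  </row>
  <row>
    <cell>Q1</cell>
    <cell>Q2</cell>
  </row>
  <row>
    <cell unit="USD">120</cell>
    <cell unit="USD">135</cell>
  </row>
</table>
"""

def expand_rows(xml_text):
    """Return each row as a list of cell texts, repeating merged cells across their span."""
    root = ET.fromstring(xml_text.strip())
    rows = []
    for row in root.findall("row"):
        cells = []
        for cell in row.findall("cell"):
            span = int(cell.get("colspan", "1"))  # cells without colspan cover one column
            cells.extend([cell.text.strip()] * span)
        rows.append(cells)
    return rows

rows = expand_rows(DOC)
```

After expansion every row has the same width, and the merged header is repeated over both columns it covers. A flat text dump of the same table would have lost that relationship.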

I think we’re going to see more and more alternatives to JSON in the future.

Scalable blockchains: Powering AI’s probabilistic future

Here’s where scalable blockchains come in—and they’re not the only players, but they’re a standout example. Blockchains like BSV, designed for enterprise-grade scalability, micropayments, and low fees, are unlocking new ways to build AI-driven applications. Why does this matter?

AI’s probabilistic thinking thrives on real-time, decentralized data flows, and blockchains provide a tamper-evident, secure foundation for that. For instance, BSV’s ability to handle massive transaction volumes (as noted in the BSV blockchain web result) could support AI agents—like Manus—processing data across distributed networks, reducing latency and token costs while ensuring data integrity.

More importantly, it provides accountability and transparency for the speed at which AIs handle data today. In the coming years, I suspect more users will demand transparency around this (nods to “AI Ethics” circles). Right now, the hype and excitement are real, but data is leaking out all over the place.

This also ties into our shift away from JSON-heavy pipelines. Blockchains can store and manage unstructured or probabilistic data (e.g., AI inferences, user interactions) in a natively scalable way, bypassing the need for constant parsing or cleaning. Imagine Manus’ LLMs interacting with a BSV-based system to log and verify web interactions in real-time—layout data, merged cells, and all—without the overhead of traditional databases or JSON structures. Other scalable blockchains (e.g., those supporting AI infrastructure for supply chain or finance) are doing similar work, integrating AI and blockchain to create tamper-evident, efficient app ecosystems.
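The "tamper-evident" property can be illustrated without any blockchain at all. The sketch below is a plain in-memory hash chain built with Python's `hashlib`. It is not BSV's API or any blockchain client, and the function names (`entry_hash`, `append_entry`, `verify`) and the sample payloads are hypothetical. It only shows the underlying idea: each logged interaction commits to the one before it, so a later edit is detectable.

```python
import hashlib

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry (an assumption of this sketch)

def entry_hash(prev_hash: str, payload: str) -> str:
    """Hash the previous entry's hash together with the raw payload text."""
    return hashlib.sha256((prev_hash + "\n" + payload).encode("utf-8")).hexdigest()

def append_entry(log: list, payload: str) -> None:
    """Append a payload, linking it to the hash of the last entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "payload": payload,
                "hash": entry_hash(prev_hash, payload)})

def verify(log: list) -> bool:
    """Recompute every link; any edited payload or broken link fails the check."""
    expected_prev = GENESIS
    for entry in log:
        if entry["prev"] != expected_prev:
            return False
        if entry["hash"] != entry_hash(entry["prev"], entry["payload"]):
            return False
        expected_prev = entry["hash"]
    return True

log = []
append_entry(log, "visited pricing page; table has a merged header cell")
append_entry(log, "model inferred: plan B is cheapest")
intact = verify(log)              # chain is consistent before any edits

log[0]["payload"] = "visited pricing page; nothing unusual"  # simulate tampering
tamper_detected = not verify(log)  # the stored hash no longer matches the payload
```

Note that the payloads here are free text rather than parsed records. A real deployment would anchor such hashes on a shared ledger so that no single party could rewrite the whole chain, which this local sketch does not attempt.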

This is a significant cultural shift, reflecting how we’re rethinking data for AI. Just as LLMs are settling and Model Context Protocols (MCPs) are trending, scalable blockchains signal that we should build apps that leverage decentralized, probabilistic systems, not centralized, deterministic ones. It’s another guardrail for us to consider: Values of trust, transparency, and scale baked into the very core of our applications.

Manus: Innovating with LLMs, but under the hood

This brings me to Manus, an innovative new (Chinese) player in the AI space. As Yichao “Peak” Ji mentioned on X, Manus isn’t using the MCP from Anthropic but instead draws inspiration from approaches like CodeAct, focusing on coding as a universal problem-solving method for LLMs.
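For readers unfamiliar with the "code as action" idea, here is a rough Python sketch of the general pattern: the model's output is treated as a small program, the program is executed, and whatever it prints is fed back as the observation. This is not Manus' implementation or the CodeAct reference code. The `model_output` string is a hard-coded stand-in for what an LLM might emit, and `run_action` is a hypothetical helper.

```python
import contextlib
import io

def run_action(code: str, namespace: dict) -> str:
    """Execute model-written code and capture what it prints as the observation.

    WARNING: exec() is not a sandbox. A real agent would isolate this step.
    """
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(code, namespace)
    return buffer.getvalue()

# Stand-in for an LLM response: instead of calling a fixed tool, it writes code.
model_output = "total = sum(prices)\nprint(total)"

# Data the agent already has in its working environment (made up for this example).
environment = {"prices": [3, 4, 5]}

observation = run_action(model_output, environment).strip()
```

The appeal of this pattern is that one general mechanism (run some code) replaces a growing catalog of narrowly defined tools, at the cost of needing strong isolation around the execution step.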

Manus’ approach, using browser-based extraction over text-only tools like Firecrawl, shows how AI can retain the layout and structural info, reducing context length and enabling complex operations. But under the hood, Manus still leans on LLMs like Claude, tapping into their probabilistic power.

This is another signal I’m watching closely. Over the next 12–18 months, I suspect LLMs like Claude might start to “settle,” not in terms of stagnation but in reaching a maturity where their core capabilities stabilize. We’re already seeing diminishing returns on scaling model size, and the focus is shifting to fine-tuning, integration, and building on top. Enter MCPs.

MCPs are currently trending, and they standardize how AI models/agents interact with external tools and application programming interfaces (APIs). Manus’ choice to sidestep MCPs for now is interesting, but it hints at a future where these protocols, layered on top of settled LLMs and scalable blockchains, drive the next wave of innovation: think real-time, dynamic app-building powered by AI’s probabilistic reasoning and blockchain’s scalability.
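To give a feel for what "standardizing" means here, the following Python sketch builds the kind of message an MCP client might send to invoke a tool. It assumes, based on the publicly documented protocol, that MCP messages follow JSON-RPC 2.0 and that tool invocations use the `tools/call` method with a `name` and `arguments`. The tool name `fetch_page`, its argument, and the helper `build_tool_call` are hypothetical, and no real MCP client library is used.

```python
import json

def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Assemble a JSON-RPC 2.0 request asking a server to run one named tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }

# Hypothetical tool and argument, for illustration only.
request = build_tool_call(1, "fetch_page", {"url": "https://example.com"})

wire = json.dumps(request)   # what would travel over the transport
decoded = json.loads(wire)   # what the receiving side would parse
```

The point is that every tool, whatever it does, is reached through the same envelope, so an agent does not need bespoke glue code per API.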

Building apps for probabilistic AI: A call to action

So, what does this mean for how we program and build applications? We need to rethink our guardrails—those beliefs, cultures, and values that shape human probabilistic thinking—and apply them to AI-driven development. We should design apps that embrace uncertainty, context, and inference instead of forcing AI into deterministic boxes (like heavy JSON parsing).

This might mean:

- Using XML or similar structured formats not as endpoints but as inputs that AI can reason over, and potentially storing them on scalable blockchains for tamper-proof, real-time access.

- Prioritizing tools and frameworks that leverage LLMs’ strengths, like Manus’ browser-based approach, over legacy data-cleaning pipelines, and integrating them with blockchain for scalability.

- Preparing for a post-LLM world where MCPs and other protocols build on settled models and blockchains, enabling richer, more dynamic applications that balance AI’s messiness with blockchain’s structure.

It’s a cultural shift, sure, but also a technical one. Just as Google’s intent-based search required us to rethink information access, AI’s probabilistic thinking and scalable blockchains tell us to rethink how we code. Manus is leading the charge at the time of this article, but it’s just the beginning—more innovations will pile on as LLMs stabilize, protocols like MCPs take center stage, and blockchains power the “decentralized,” probabilistic apps of tomorrow.

I understand the comfort of structured data and human-readable formats, but for AI and blockchain now, it’s time to trust their ability to handle chaos better than humans and build apps that reflect how we—and our machines—really think.

03-26-2026